`class .DockerSwarmOperator(image, *args, **kwargs)`: Execute a command as an ephemeral docker swarm service.

Regarding the image-pull part of the code, I think it is short and straightforward enough to remain inside `execute` (I'll add a comment to it, though).
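Keeping a short pull step inline in `execute` and delegating the real work to a separate method can be sketched as follows. This is a minimal, hypothetical illustration, not the Airflow implementation: `FakeDockerClient`, `SwarmRunnerSketch`, and `run_image` are made-up names, and the fake client's `pull`/`create_service` signatures are simplified (docker-py's real methods take more arguments). The `force_pull` flag borrows the name of a DockerOperator parameter, but its use here is an assumption.

```python
from typing import Any, List


class FakeDockerClient:
    """Hypothetical stand-in for a Docker client; it only records calls."""

    def __init__(self) -> None:
        self.calls: List[str] = []

    def pull(self, image: str) -> None:
        self.calls.append(f"pull {image}")

    def create_service(self, image: str) -> str:
        self.calls.append(f"create_service {image}")
        return "service-id"


class SwarmRunnerSketch:
    """Illustrative only: not the Airflow DockerSwarmOperator."""

    def __init__(self, image: str, client: Any, force_pull: bool = False) -> None:
        self.image = image
        self.cli = client
        self.force_pull = force_pull

    def execute(self, context: Any = None) -> str:
        # The pull step is short, so it stays inline in execute().
        if self.force_pull:
            self.cli.pull(self.image)
        # Everything that creates and runs the swarm service is delegated.
        return self.run_image()

    def run_image(self) -> str:
        # Hypothetical helper: create the (ephemeral) service for the image.
        return self.cli.create_service(self.image)
```

With `force_pull=True`, `execute()` records a pull followed by a service creation; without it, only the service is created. Keeping the pull in `execute` leaves `run_image` focused on a single responsibility.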

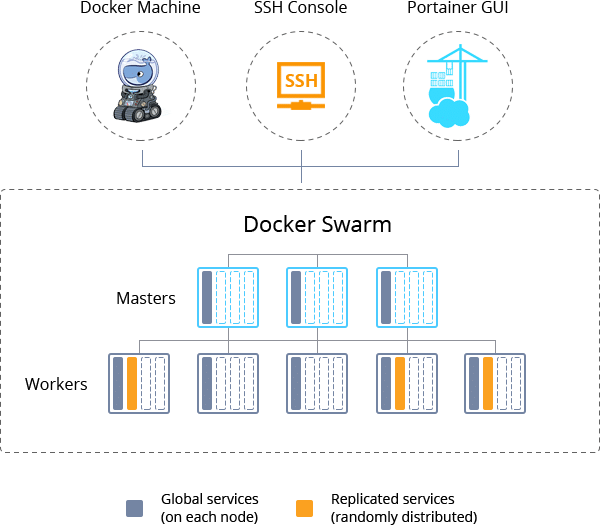

Others have reported success with the DockerSwarmOperator in the past. An example use case is using Docker Swarm orchestration to make one-time scripts highly available.

I have a task in Airflow that uses a DockerSwarmOperator to run a short-lived container. I was expecting the container to be created, stay alive for 60 seconds, and then exit with code 0. Instead, once the container finished execution with no errors (DESIRED STATE: Shutdown), the task in Airflow stayed in the 'Running' state forever. Server: Oracle Linux 9, Airflow 2.4, apache-airflow-providers-microsoft-azure.

I also created a DAG to schedule a one-shot job. It imports `from datetime import time` and `from _swarm_operator import DockerSwarmOperator`, is declared with `With retries', default_args=DEFAULT_ARGS, schedule_interval='0 * * * Mon-Sun', start_date=datetime(2019, 7, 23), max_active_runs=1, catchup=False) as twenty_four_by_seven_dag:`, and points the operator at `docker_url="unix://var/run/docker-sysavtbuild.sock"`. In the source, the operator is declared as `class DockerSwarmOperator(DockerOperator):` with the docstring "Execute a command as an ephemeral docker swarm service."; the provider documentation lists the signature as `class .docker_swarm.DockerSwarmOperator(*, image: str, enable_logging: bool = True, configs: Optional[List[types.`. Docker host details: Operating System: Fedora 29 (Twenty Nine); runc version: dc9208a3303feef5b3839f4323d9beb36df0a9dd; containerd version: 7ad184331fa3e55e52b890ea95e65ba581ae3429; network plugins: bridge, host, ipvlan, macvlan, null, overlay; log plugins: awslogs, fluentd, gcplogs, gelf, journald, json-file, local, logentries, splunk, syslog; No Proxy: localhost,127.0.0.1.com.

mik-laj, I see your point regarding `execute`. I think `execute` is actually doing what a `run_image` method should be doing, so it makes sense to rename it to something like `run_image`.

I found this diagram of the CeleryExecutor architecture very helpful for sorting things out.
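Whether the Airflow task ever leaves 'Running' depends on the operator noticing that the swarm service has terminated. The sketch below assumes the operator polls the state of the service's first task and treats a fixed set of Docker Swarm task states as terminal; that state list is my assumption of what the provider checks, so verify it against your provider version. `wait_for_service` and `list_tasks` are hypothetical names; with docker-py, `list_tasks` could wrap `APIClient.tasks(filters={"service": service_id})`.

```python
import time
from typing import Callable, Dict, List

# Swarm task states treated as terminal in this sketch (assumption: mirrors the
# list the Airflow provider checks; verify against your provider version).
TERMINAL_STATES = {"complete", "failed", "shutdown", "rejected", "orphaned", "remove"}


def wait_for_service(
    list_tasks: Callable[[], List[Dict]],
    poll_interval: float = 1.0,
    timeout: float = 300.0,
) -> str:
    """Poll until the service's first task reaches a terminal state.

    list_tasks is a hypothetical callable returning task dicts shaped like
    Docker API task objects, i.e. {"Status": {"State": ...}}.
    Returns the terminal state, or raises TimeoutError instead of waiting forever.
    """
    deadline = time.monotonic() + timeout
    while True:
        tasks = list_tasks()
        if tasks:
            state = tasks[0]["Status"]["State"]
            if state in TERMINAL_STATES:
                return state
        if time.monotonic() >= deadline:
            raise TimeoutError(f"service did not terminate within {timeout} s")
        time.sleep(poll_interval)
```

The explicit timeout is a design choice of this sketch: a service whose state is never reported as terminal surfaces as a task failure rather than a task stuck in 'Running'.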

Python version: 3.7.2 (default, Jan 16 2019, 19:49:22). Cloud provider or hardware configuration: HP ProLiant DL560 Gen8, BIOS P77, 64 CPUs.

Far from being the 'ultimate set-up', these are some settings that worked for me using Airflow's docker-compose file on the core node and on the workers. Main node: the worker nodes have to be reachable from the main node, where the webserver runs.

To run the tests in the environment, we can just run `docker-compose run webserver bash`, which gives us access to a bash shell running in the container:

`airflow-on-docker-compose git:(master) docker-compose run webserver bash`
`Starting airflow-on-docker-compose_postgres_1`
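A quick way to check that the worker nodes are reachable from the main node is a plain TCP probe run on the main node. This is a minimal sketch: `is_reachable` is a hypothetical helper, and port 8793 is assumed to be the worker log-server port (Airflow's default `worker_log_server_port`), which, as I understand it, the webserver contacts to fetch task logs when logs are not shipped to remote storage.

```python
import socket


def is_reachable(host: str, port: int = 8793, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    Port 8793 is an assumption (Airflow's default worker log-server port);
    adjust it to match your configuration.
    """
    try:
        # create_connection resolves the host and completes the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
```

For example, looping over hypothetical worker hostnames such as `worker-1` and `worker-2` and printing `is_reachable(host)` for each shows which workers the webserver would fail to contact.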
